The Story of the Evil Assert Operator

Today I am going to talk about the evil side of the Assert Operator. To start out, let me introduce this operator to you:

The Assert Operator

The Assert Operator is used by SQL Server in execution plans that require some condition to be satisfied to be valid.

A common scenario in which an Assert Operator is used is an insert into a table that has a check constraint defined. Take for example the dbo.TableWithCheckConstraint table:

Id INT PRIMARY KEY CLUSTERED,

Even INT CONSTRAINT TableWithCheckConstraint_Even_Check CHECK (Even % 2 = 0)

);

[/sql]

If you run this simple insert against that table

SELECT n, 2*n FROM (VALUES(1),(2))X(n);

[/sql]

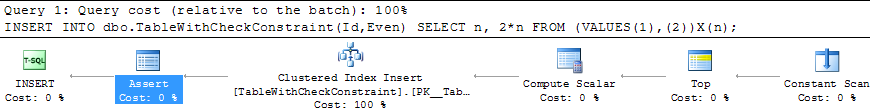

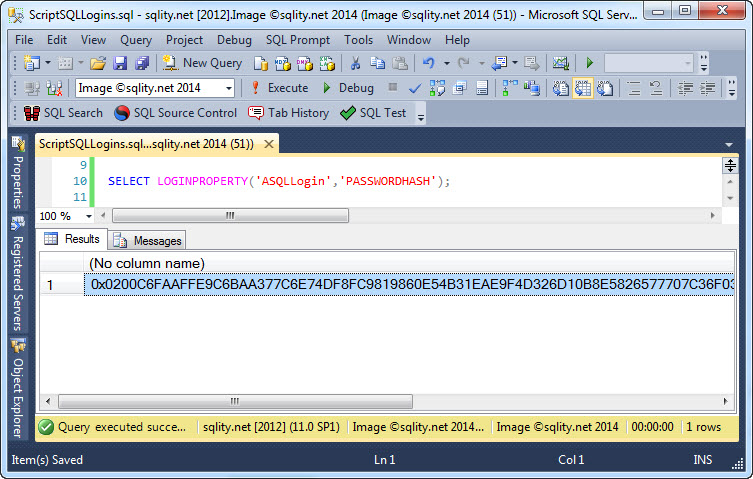

you will get an execution plan that looks like this:

The properties of the highlighted Assert Operator are shown below:

Asserts in general are very cheap operators as you can see when you look at the Estimated Operator Cost in above image. An Assert operator works by calculating a given expression. If that expression results in anything other than NULL, the assert fails and stops the execution of the current statement. The expression in above example is the formula we used for the check constraint itself, wrapped in a case statement to return either NULL or 0.

The Dark Side of the Assert

So, how can such an innocent little operator be evil?

Sometimes SQL Server requires a certain condition to be satisfied in situations where you do not necessarily expect it. The most common example is a sub-query in the column list or the WHERE clause of a select statement as shown in the following example:

FROM (VALUES (1), (2) ) X(m)

);

[/sql]

If you execute this query you get this error:

Msg 512, Level 16, State 1, Line 1 Subquery returned more than 1 value. This is not permitted when the subquery follows =, !=, <, <= , >, >= or when the subquery is used as an expression.

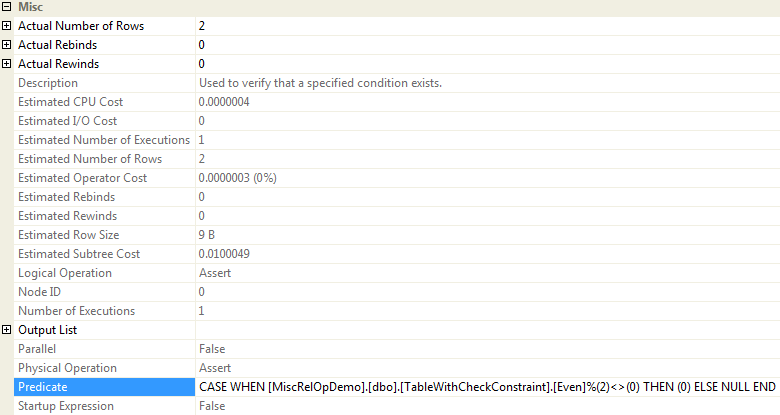

To enforce that rule, SQL Server is using an Assert Operator again:

In above example the Stream Aggregate Operator is tasked with counting the rows returned:

[Expr1005] = Scalar Operator(Count(*))

The Assert then makes sure that this count is at max one with the following predicate:

CASE WHEN [Expr1005]>(1) THEN (0) ELSE NULL END

(If you look more closely you will find that the Stream Aggregate actually calculates two aggregates in this query and that the second one is of a surprising type. Check out this article by Paul White for an explanation.)

In this example the Stream Aggregate and the Assert make up together 50% of the (estimated) query cost, but as this is a very made-up example I would not pay to much attention to that. So let us look at a real life example.

The Forgotten Uniqueness

First we need a few tables:

Id INT PRIMARY KEY CLUSTERED,

Sort1 INT UNIQUE,

Luv INT,

Data CHAR(1000)

);

DECLARE @BigSize INT = 1000000;

DECLARE @LookupSize INT = 1000;

INSERT INTO dbo.AssertBigFact(Id,Sort1,Luv,Data)

SELECT n,(n*1123)%@BigSize+1,n%@LookupSize+1,'' FROM dbo.GetNums(@BigSize)

CREATE TABLE dbo.AssertLookupNonUnique(

Id INT ,

Val INT

);

CREATE CLUSTERED INDEX AssertLookupNonUnique_CI ON dbo.AssertLookupNonUnique(Id);

CREATE TABLE dbo.AssertLookupUnique(

Id INT ,

Val INT

);

CREATE UNIQUE CLUSTERED INDEX AssertLookupUnique_CI ON dbo.AssertLookupUnique(Id);

INSERT

INTO dbo.AssertLookupNonUnique(Id,Val)

OUTPUT INSERTED.Id,INSERTED.Val

INTO dbo.AssertLookupUnique(Id,Val)

SELECT n,n%37 FROM dbo.GetNums(@LookupSize);

[/sql]

As most of my examples, this one is also using Itzik's GetNums function that you can get here: Virtual Auxiliary Table of Numbers.

It creates three tables, the table dbo.AssertBigFact that contains 1,000,000 rows and the two small 1,000 row lookup tables dbo.AssertLookupUnique and dbo.AssertLookupNonUnique. The insert statement uses the OUTPUT clause to insert the same rows in both tables, so with the exception of the clustered index on the dbo.AssertLookupNonUnique table that got "accidentally" not declared as unique, these two tables are identical.

Now let us compare the following two almost identical queries. They only differ in the lookup table they go against.

SET STATISTICS TIME ON;

GO

SELECT b.*,(SELECT Val FROM dbo.AssertLookupNonUnique l WHERE l.Id = b.Luv) Val

FROM dbo.AssertBigFact b

ORDER BY b.Sort1,Val;

GO

SELECT b.*,(SELECT Val FROM dbo.AssertLookupUnique l WHERE l.Id = b.Luv) Val

FROM dbo.AssertBigFact b

ORDER BY b.Sort1,Val;

GO

SET STATISTICS TIME OFF;

SET STATISTICS IO OFF;

[/sql]

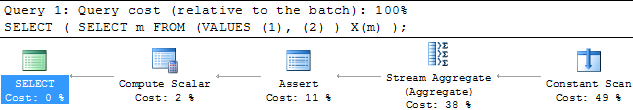

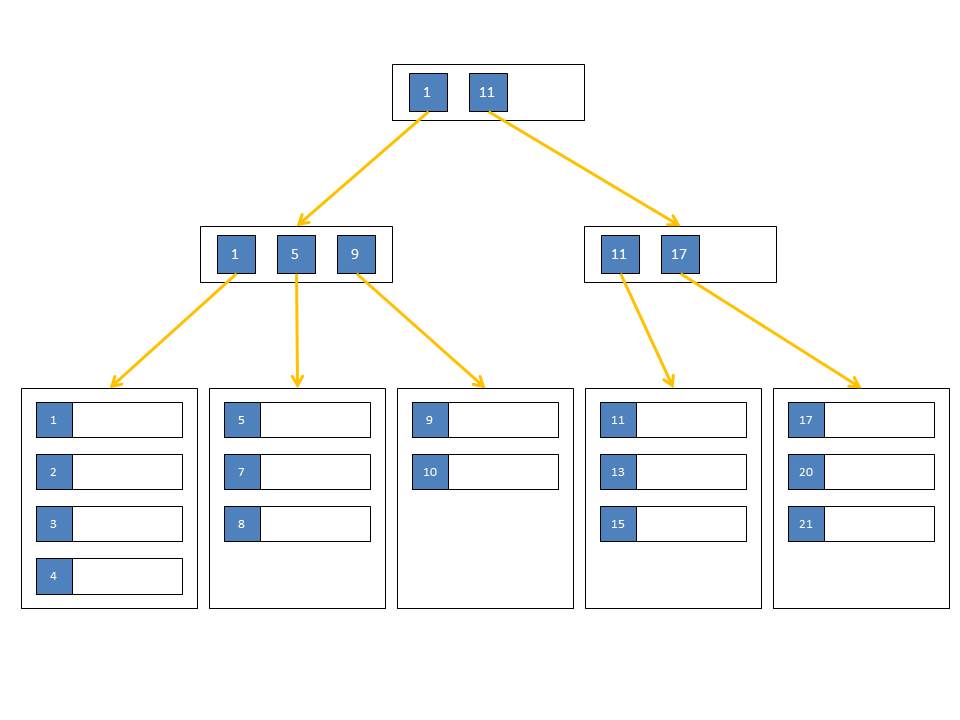

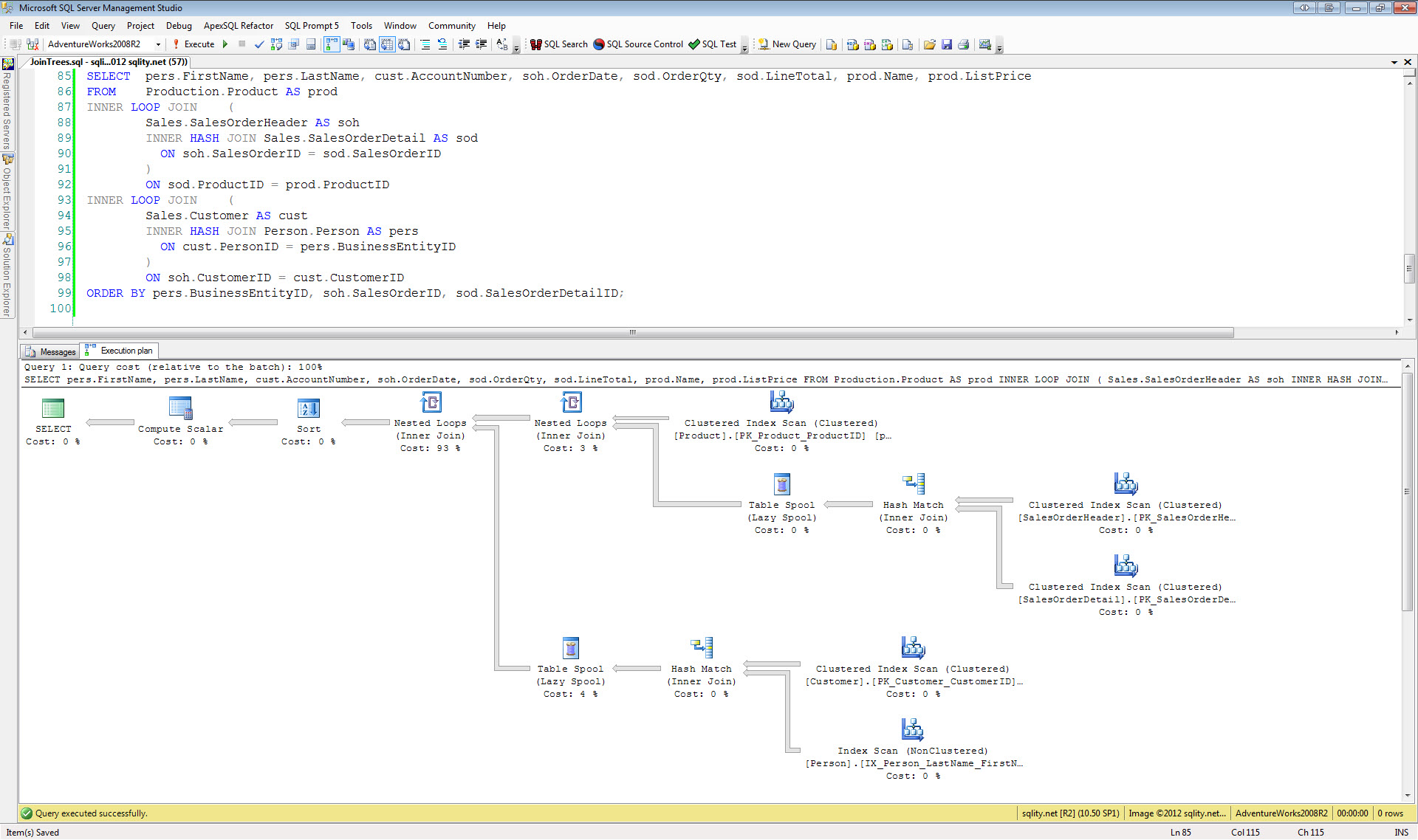

The execution plans for above queries look like this:

The top version, going against the non-unique table, requires the use of an Assert Operator. The second query on the other end can get away without in, as SQL Server knows that the sub-query can return only a single row because the Id column was declared unique in the definition of the clustered index.

In a scenario like this where a rather large table gets joined with a small table, using a hash join is usually the fastest way for SQL Server to execute the query. A hash join requires the entire first table to be stored in a hash table in memory before any row can be returned. A hash can spill to tempdb if there is not enough free memory available, but such a spill is expensive. So hash joins are perfect in a scenario where one of the two join partners is small.

In our case SQL Server chose the hash join for the second query, as expected. However the first query uses a loop join. The reason for this is quite simple: SQL Server does not know that the Id column in the dbo.AssertLookupNonUnique table is unique, so it needs to assert that for each Luv value in the dbo.AssertBigFact table there is only one row in the dbo.AssertLookupNonUnique table with a matching Id value. For that it needs to read the dbo.AssertBigFact table first, as there might be id values in the dbo.AssertLookupNonUnique table that do not have a matching Luv value in the dbo.AssertBigFact table. If those Id values were duplicated it would not matter for this query, so the Assert can run only against Id values with a matching Luv value. That however means, that SQL Server can't build the hash table first, at least not with the small dbo.AssertLookupNonUnique table. But it doesn't make sense to build the hash table using the dbo.AssertBigFact table as that would take to much time and memory and would therefore be rather ineffective.

A merge join is not a good choice either, as it requires both inputs to be sorted on the join column which is not the case. SQL Server could insert a sort before the join, but that would mean that the full one million rows had to be sorted twice as a different sort order is requested by the query – a scenario worth avoiding.

That means that SQL Server has to use the loop join algorithm. That means it has to assert that there is only a single row in the dbo.AssertLookupNonUnique for each row in the dbo.AssertBigFact table which in turn means that the Stream Aggregate and Assert Operator pair gets executed 1,000,000 times. While each execution is small and cheap, doing a million of them is not. But this is not even the worst problem with this query.

Wasted Logical Reads

If we look at the logical reads (returned by the SET STATISTICS IO ON statement in above batch) for the query against the dbo.AssertLookupUnique we get these numbers:

Table 'AssertLookupUnique'. Scan count 3, logical reads 10, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0. Table 'AssertBigFact'. Scan count 3, logical reads 143712, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0. Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

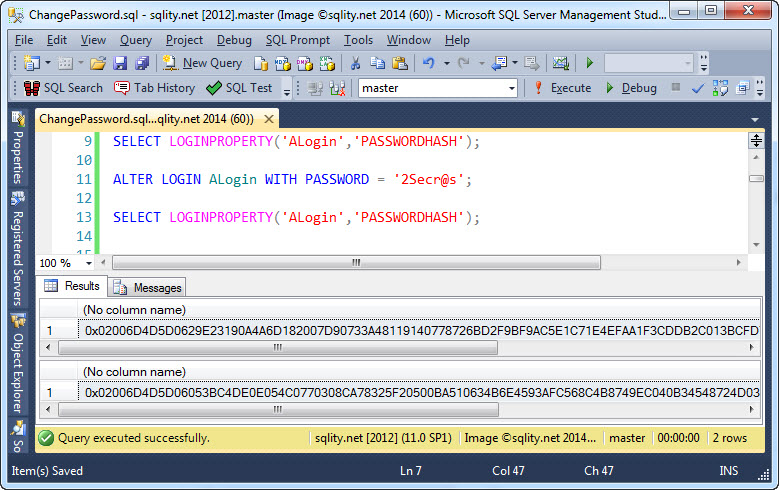

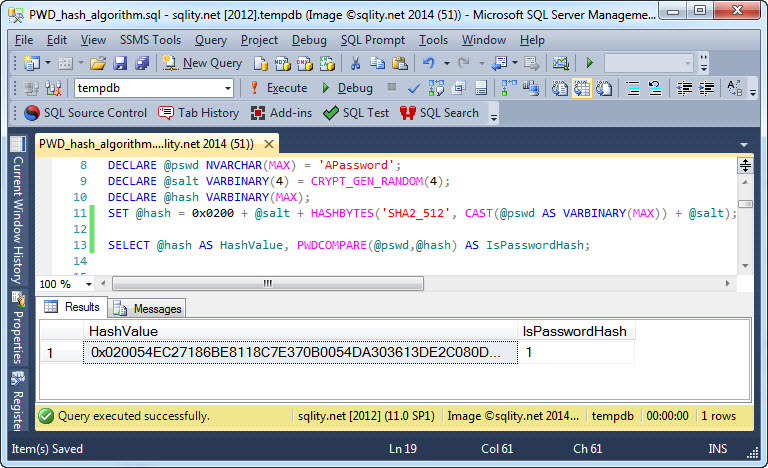

The dbo.AssertLookupUnique has 3 pages as a quick check against sys.dm_db_index_physical_stats confirms:

i.name ,

ips.index_depth ,

ips.page_count

FROM sys.indexes i

JOIN sys.dm_db_index_physical_stats(DB_ID(), NULL, NULL, NULL, NULL) ips

ON ips.object_id = i.object_id AND ips.index_id = i.index_id

WHERE i.index_id < 2

AND i.object_id IN ( OBJECT_ID('dbo.AssertLookupNonUnique'),

OBJECT_ID('dbo.AssertLookupUnique'),

OBJECT_ID('dbo.AssertBigFact') );

[/sql]

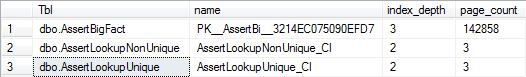

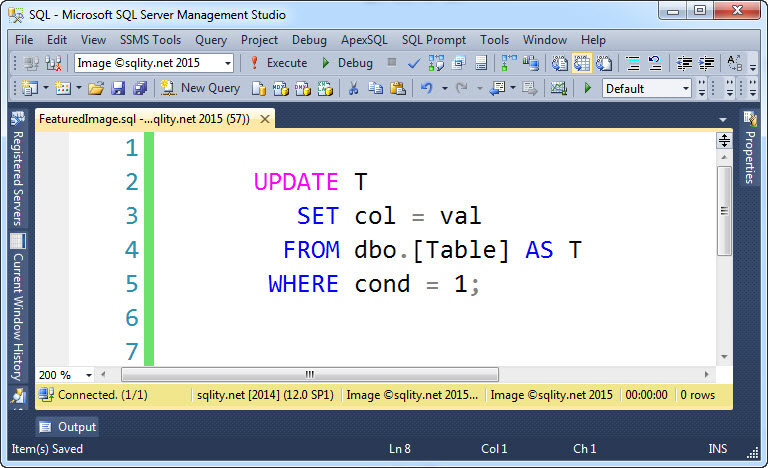

This query returns these numbers:

The 10 logical reads are caused by the parallelism of this query. The different threads of a single query often read pages that another thread has looked at before. The same behavior you can see with the dbo.AssertBigFact table where also a few of the 142858 pages got read more than once.

If we now look at the numbers for the query against the dbo.AssertLookupNonUnique table we get a quite different picture:

Table 'AssertLookupNonUnique'. Scan count 1000000, logical reads 2004000, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0. Table 'AssertBigFact'. Scan count 3, logical reads 143760, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0. Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

While the number of reads against the dbo.AssertBigFact table is about the same as before, there are over 2 million logical reads recorded for the dbo.AssertLookupNonUnique table. — What a difference...

The seek against the dbo.AssertLookupNonUnique was executed one million times, once for each row in the dbo.AssertBigFact table. As the index depth is 2 levels, each seek had to look at two pages – two million in total.

Conclusion

As you have seen in the above example, requiring an assert can be quite expensive. While the Assert Operator is not that expensive in it self, SQL Server is severely restricted in the plan choices it can make when an assert is logically required.

To avoid situations like this you should always try to give the Optimizer as much information as possible. That includes keeping statistics up to date and using proper indexes. But, as this article has shown, creating an index on the right columns is not necessarily enough. So, if you have an index on a column with unique values, make sure to declare it as unique.