How to Overflow a BLOB Value

Microsoft advertises that you can store 2 gigabytes in a single BLOB value. But, what does this number actually mean? If you buy a 2TB hard drive, you might actually only get 1.8TB of usable space. The same picture presents itself when you are looking at thumb drives or memory cards for your camera. This is in part due to the fact, that some people call 1000 bytes a kilobyte and some people call 1024 bytes a kilobyte. The same factor applies then to get from a kilobyte to a megabyte and again to get to a gigabyte and from there to a terabyte. The difference at the terabyte level is 1024^4 – 1000^4 = 1,099,511,627,776 - 1,000,000,000,000 which is close to 100 gigabytes. But there are also other factors at play that hide behind terms like "raw-storage".

With all that storage size fuzziness in the industry, I thought it might be interesting to figure out how much room there really is in a SQL Server BLOB.

BLOB Explained

BLOB stands for Binary Large Object. Different database systems have different definitions of how large a value has to be to qualify as a BLOB value. In SQL Server, This is really more due to the data type than to actual value size. However, the largest value you might encounter that is not a BLOB is 8000 bytes in size.

The data types in SQL Server that allow for BLOB storage are VARCHAR(MAX), NVARCHAR(MAX) and VARBINARY(MAX). You might also still encounter the deprecated TEXT, NTEXT and IMAGE legacy data types.

Technically, only the VARBINARY(MAX) data type allows to store a BLOB, as that acronym is based on the word "binary". Sometimes you will find the character large objects specified as just LOB values. However, the term BLOB has been established to include any large value stored in one of the above data types.

Testing the Two Gigabyte Boundary

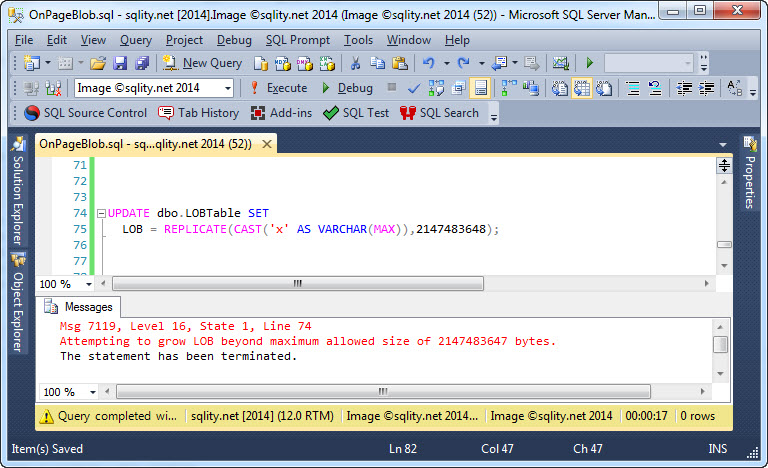

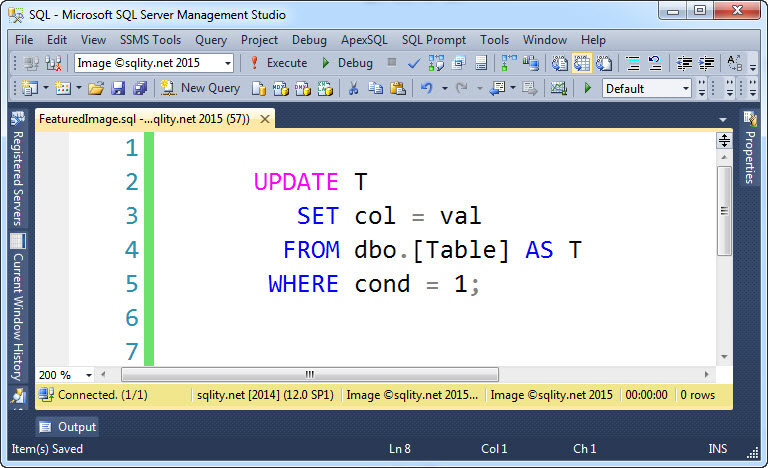

The most generous definition of two gigabytes is 2*1024*1024*1024 = 2,147,483,648 bytes. Let us try to create a value that size. I already created a simple table with a VARCHAR(MAX) column and inserted a single row. With the following statement, I am going to attempt to store a 2147483648-character string in that column:

LOB = REPLICATE(CAST('x' AS VARCHAR(MAX)),2147483648);

[/sql]

The statement does not have a WHERE clause as there is only a single row in that table right now. Running this statement results in . . . . . . an error:

Apparently, 2 gigabytes is too much to be stored in a SQL Server BLOB data type. However, if you look at the error message, you see that we were not far off after all. The maximum number of characters allowed is just one character less. Let us try to confirm that:

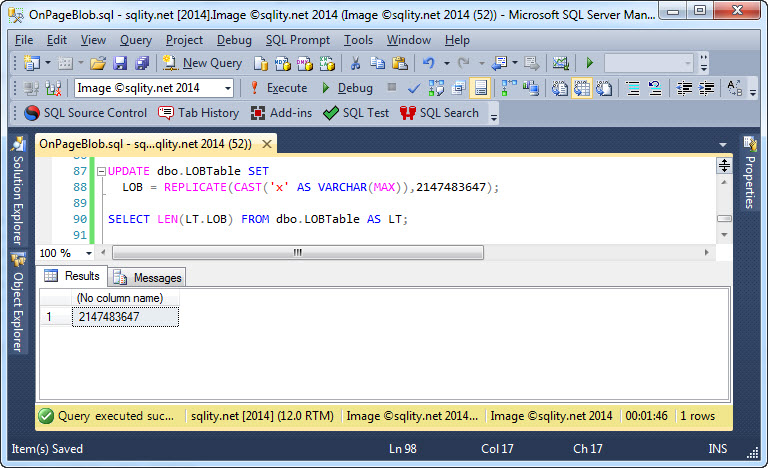

LOB = REPLICATE(CAST('x' AS VARCHAR(MAX)),2147483647);

SELECT LEN(LT.LOB) FROM dbo.LOBTable AS LT;

[/sql]

This time we are also going to determine the length of the stored string after the update, just to make sure we did not lose any characters somewhere mysteriously. The result is very much promising:

The reason for this single missing byte is a performance optimization.

The Missing Byte

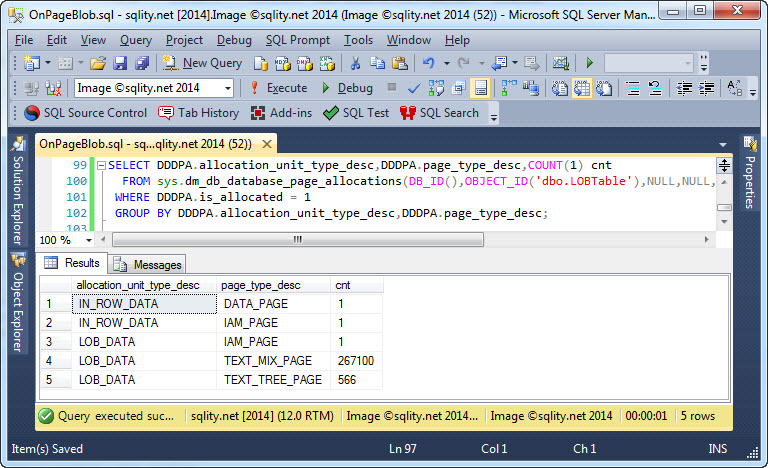

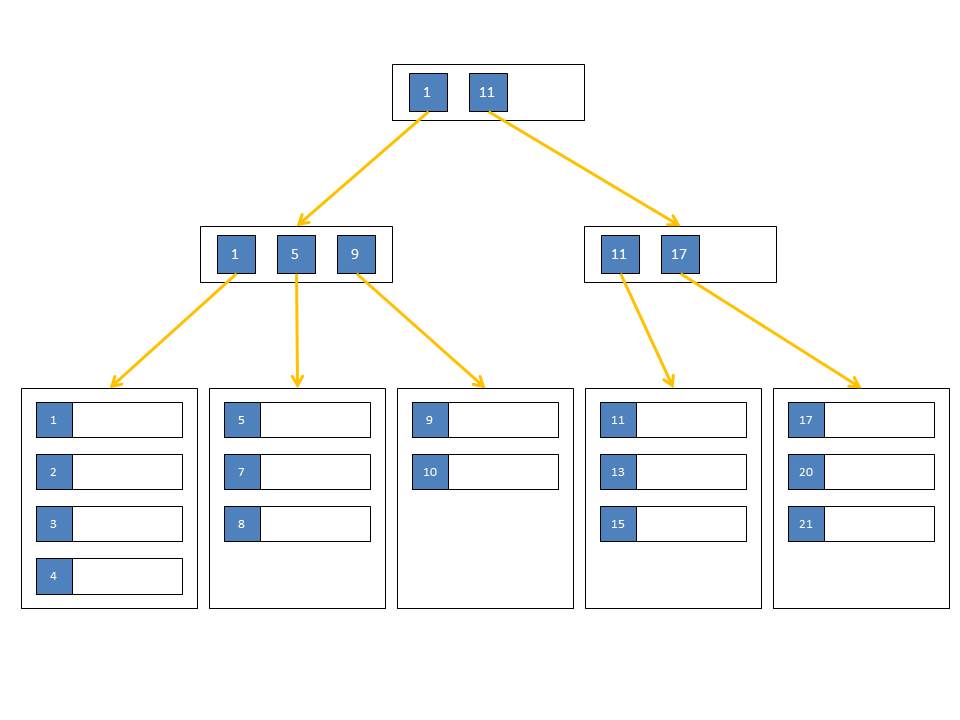

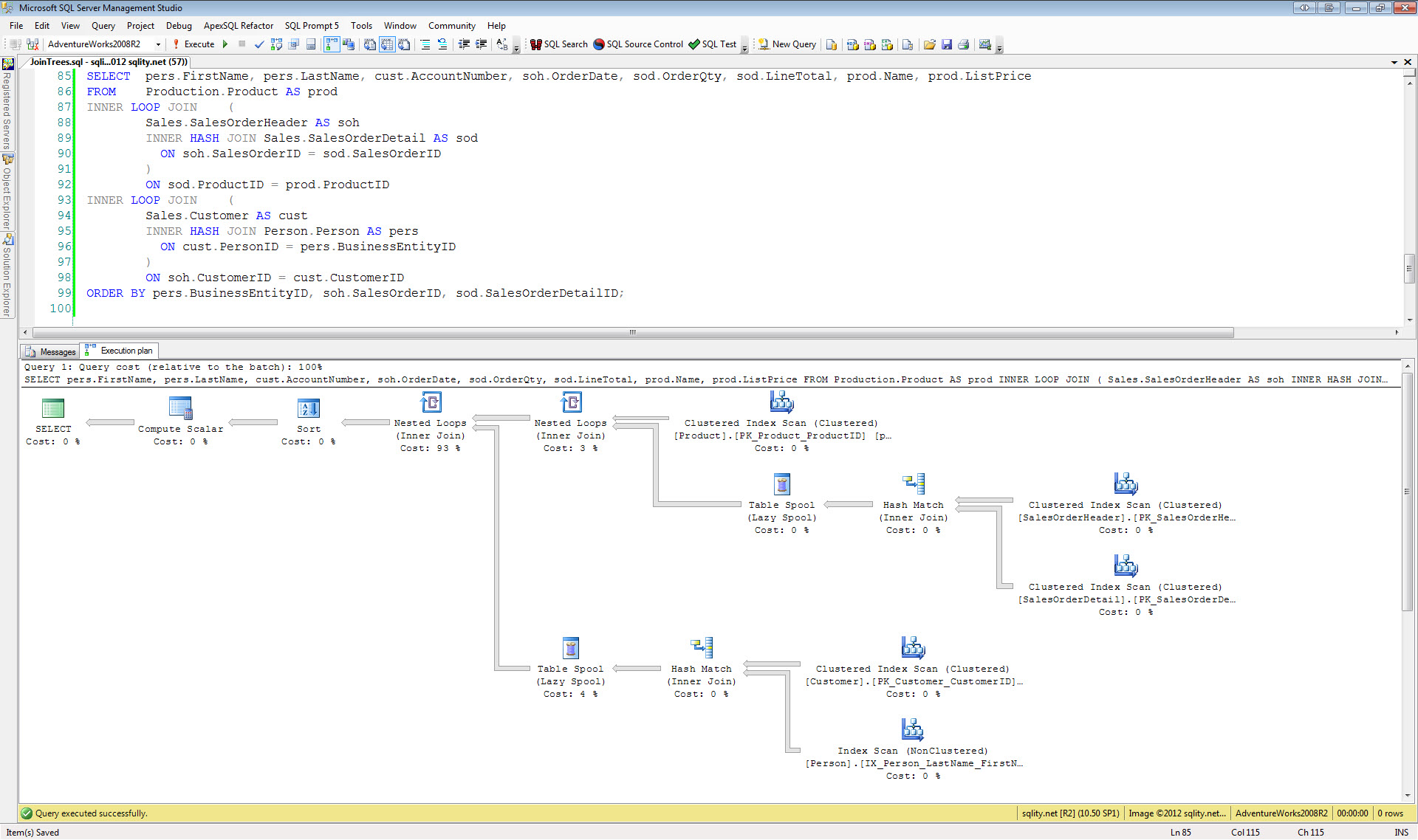

You might know that SQL Server stores every BLOB value in its own B+Tree index. That allows SQL Server to access any sub-string directly without having to read the entire value into memory. If you look at the number of pages required to store our 2147483647-byte value on disc, you see that there are 566 additional TEXT_TREE_PAGES that make up the B+Tree:

The actual data is stored in 267100 pages of type TEXT_MIX_PAGE - about 8044 bytes per page.

The index key for this B+Tree index is the position of the character within the string and is expressed as a single INT value. That means that the highest position that this index can reference is the highest number that can be stored in an INT.

SQL Server does not know unsigned integers, so the largest positive value that can be stored in an INT is 2^31-1 or 2,147,483,647. Therefore, SQL Server can only allow that many bytes in a single BLOB value.

Summary

SQL Server is marketed to allow 2 gigabytes of data to be stored in any of its three BLOB data types. The actual number of bytes that can be stored is 2^31-1 = 2,147,483,647. Not coincidentally, this is also the largest number that can be held by the INT data type.

2 Responses to How to Overflow a BLOB Value