A Working Table Compare Approach

A while back I wrote an article about the underestimated complexity of a table compare algorithm. At the time I did not show a way of how to actually implement such an algorithm.

Over the last couple of weeks I ran into several blog post that lead me to introduce a way to write one now...

The goal is to write a stored procedure, that takes the names of two tables as input and identifies all the rows that are in the one table, but not in the other. Both tables need to have the same schema. A primary key is not necessarily defined, which means that there is the potential for duplicate rows in the tables.

The first Idea that comes to mind is, to somehow compare each column of the one table with the matching column in the other table. This needs to take into account, that both values could be NULL which should be considered a match. It needs to somehow keep track of rows it matched already to not use a row more than once matching against to separate but identical rows in the other table. It also needs to automatically generate the code to do this compare as the tables where passed in as parameters. I actually have implemented this solution, and believe me, it is plain ugly.

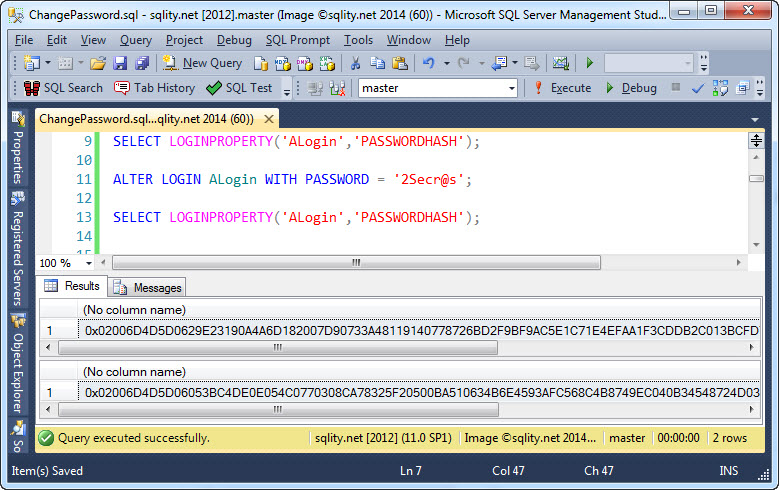

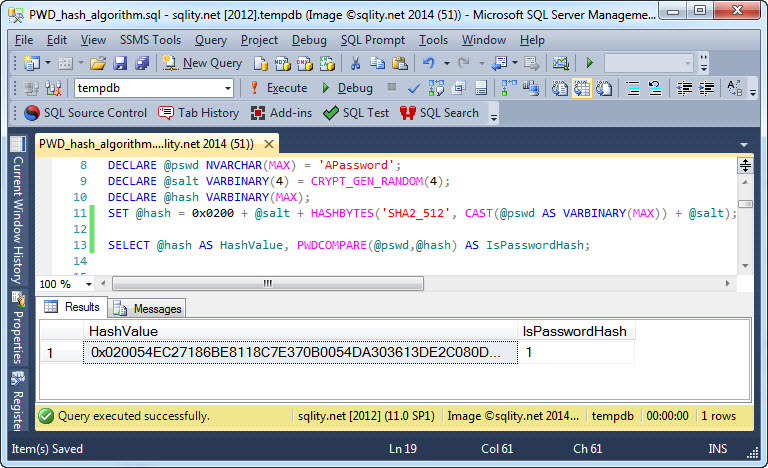

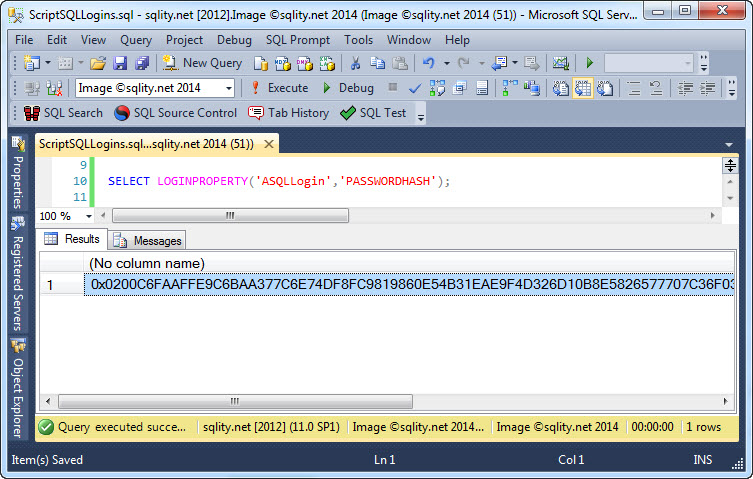

Last week Tony Rogerson wrote about detecting changed rows in a trigger. He is suggesting to concatenate all the column values of each single row together an then use the HashBytes function to calculate a hash value as a row value indicator that then can be used to compare rows to each other easily. Using a hash value for comparisons is an industry standard and is also used in several places in the SQL Server engine.

However, it is not unproblematic to do such a data compression before a comparison operation. He himself points out, that HashBytes has an input length limitation of 8000 bytes and suggests to use the CHECKSUM function instead. The CHECKSUM function returns an integer. That means you can get only 2^32 = 4,294,967,296 different values. While that sounds like a large number, it really is not.

In his blog entry The curious case of the Dubious Deadlock James Rowland-Jones gives a very good explanation about what can happen if you get to comfortable with hash values. Make sure to also check out the follow-up post to this, in which Remus Rusanu explains, why 281,474,976,710,656 is a small number.

So, what can we do about this? One option is to not calculate a checksum in the first place and just compare the concatenated column values to each other. This should not be slower that first calculating a checksum over the strings and then comparing these numbers. But this approach is also not complete. One of the reasons is, that XML is limited in length. While I admit that it is very unlikely that you will find a table with rows that contain more than 2GB of data, it is a restriction that you need to consider when designing a table compare algorithm. Also, if you take the development of hard drive or ram size into consideration, 2GB will not be huge for a long time. The other issue with this approach is, that you will have trouble converting some string or binary values to XML. Most bytes with a value under 30 cannot be converted to XML. To get the full list, run the following code:

|

DECLARE@cmd NVARCHAR(MAX); SELECT@cmd ='DECLARE @x XML;'+(SELECT'BEGIN TRY SET @x = CAST( CHAR('+ CAST(n-1 ASVARCHAR)+ ') AS XML);END TRY BEGIN CATCH PRINT '+ CAST(n-1 ASVARCHAR)+ ';END CATCH;' FROMdbo.GetNums(256) FORXMLPATH('') ); EXEC(@cmd); |

This code makes us of Itzik Ben-Gan's Virtual Auxiliary Table of Numbers dbo.GetNums to produce 256 different possible byte values. Then it tries to convert each of them to XML. If this conversion fails, the number gets printed out. As you can see, there are quite a few that fail.

Also about a week ago Merrill Aldrich wrote about Simple Monitoring for Data Changes, and his solution gets around all the problems that I mentioned above. Almost all, that is.

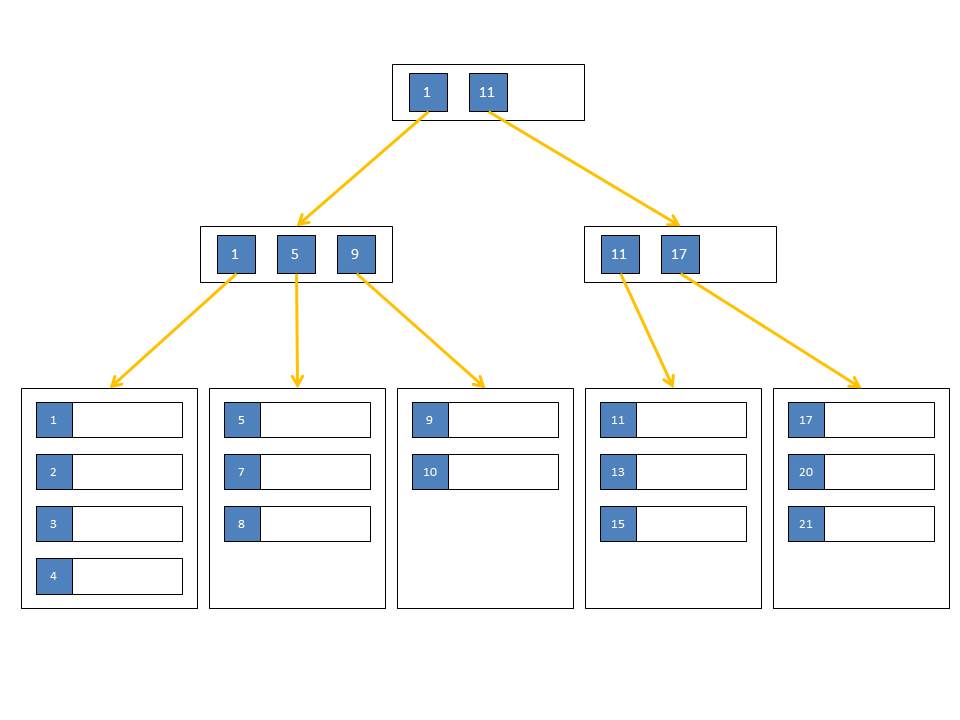

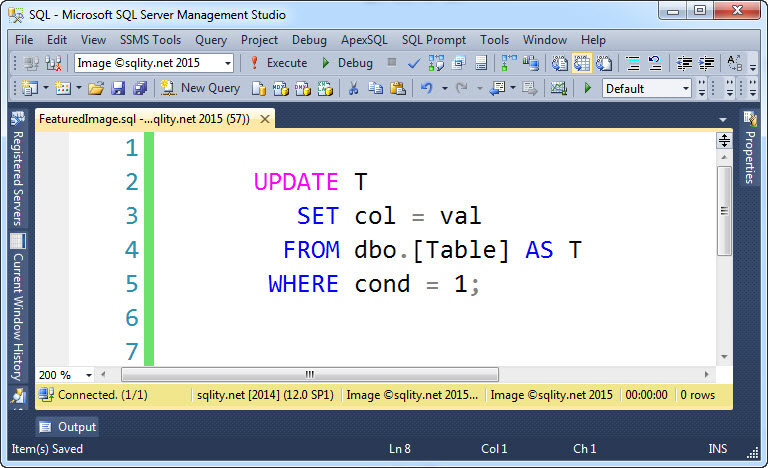

His solution uses the GROUP BY statement to make SQL Server do all the comparisons. This works for extremely large rows and it works for "funky" binary data. But it does not work for TEXT, NTEXT and IMAGE. However, There is an easy fix for that:

|

IFOBJECT_ID('tempdb..#t1')ISNOTNULLDROPTABLE#t1; CREATETABLE#t1(id INT,t TEXT,i IMAGE); GO INSERTINTO#t1 SELECT1,'text',0x1; INSERTINTO#t1 SELECT2,'test',0x2; INSERTINTO#t1 SELECT3,'text',0x2; GO SELECTCAST(t ASVARCHAR(MAX))t,COUNT(1) FROM#t1 GROUPBYCAST(t ASVARCHAR(MAX)); GO SELECTCAST(i ASVARBINARY(MAX)),COUNT(1) FROM#t1 GROUPBYCAST(i ASVARBINARY(MAX)); |

The other thing that Merrill's solution does not handle is key free tables with duplicate rows. But that also can be fixed easily. Let's look at an example:

|

IFOBJECT_ID('tempdb..#t1')ISNOTNULLDROPTABLE#t1; IFOBJECT_ID('tempdb..#t2')ISNOTNULLDROPTABLE#t2; GO CREATETABLE#t1(c1 INT,c2 INT); CREATETABLE#t2(c1 INT,c2 INT); GO INSERTINTO#t1 SELECT1,1; INSERTINTO#t1 SELECT1,1; INSERTINTO#t1 SELECT2,2; INSERTINTO#t1 SELECT3,3; GO INSERTINTO#t2 SELECT2,2; INSERTINTO#t2 SELECT2,2; INSERTINTO#t2 SELECT3,3; INSERTINTO#t1 SELECT4,4; INSERTINTO#t1 SELECT4,4; |

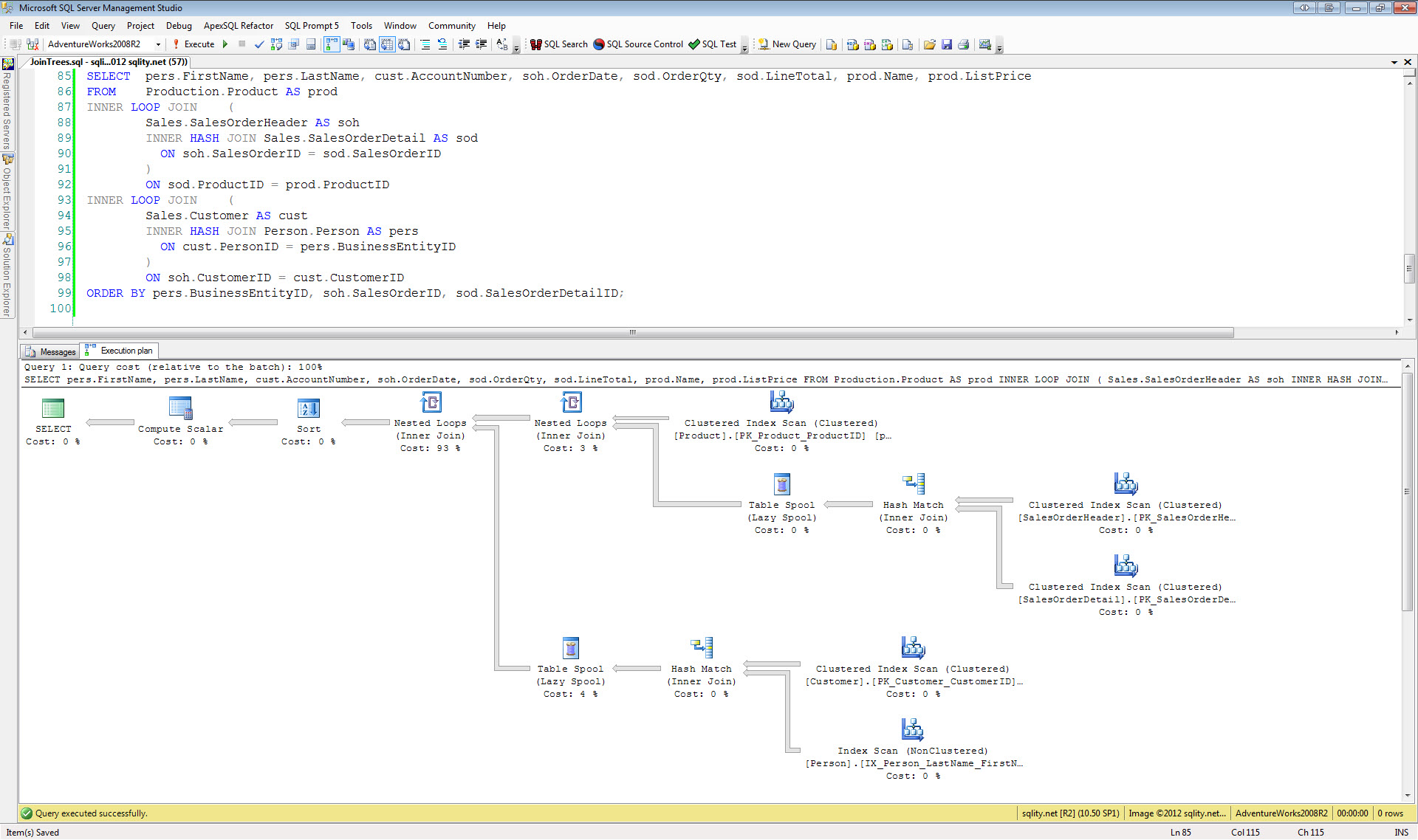

Merrill's generated code looks like this:

|

SELECTMIN(table_name)table_name,c1,c2 FROM(SELECT'#t1'table_name,c1,c2 FROM#t1 UNIONALL SELECT'#t2'table_name,c1,c2 FROM#t2 )allRows GROUPBYc1,c2 HAVINGCOUNT(*)=1; |

It is supposed to return all mismatching rows, but it returns non. To fix this we can just throw a per table count of unique rows into the mix:

|

SELECTMIN(table_name)table_name,cnt,c1,c2 FROM(SELECT'#t1'table_name,COUNT(1)cnt,c1,c2 FROM#t1 GROUPBYc1,c2 UNIONALL SELECT'#t2'table_name,COUNT(1)cnt,c1,c2 FROM#t2 GROUPBYc1,c2 )allRows GROUPBYc1,c2,cnt HAVINGCOUNT(*)=1; |

This now returns all rows that are only in one table and not in the other, as well as all rows that are in both tables but more often in one than in the other. It also returns the number of occurrences per row and table of the mismatching rows.

To make a fully working solution out of this, you have to generate this code dynamically. You can follow Merrill's example for that. Don't forget to incorporate the CAST into one of the ...(MAX) data types for TEXT, NTEXT and IMAGE type columns. The last thing you need to think of are unique column names for the two additional columns. You do not want this solution to break, if you compare tables that already contain a column called "table_name" or "cnt".

This solution fulfills all the requirements that I have for a table compare implementation. It can handle any data type and all data sizes. It performs well. And most importantly it is easy to understand an maintain.