The Unloved Backward Scan

Introduction

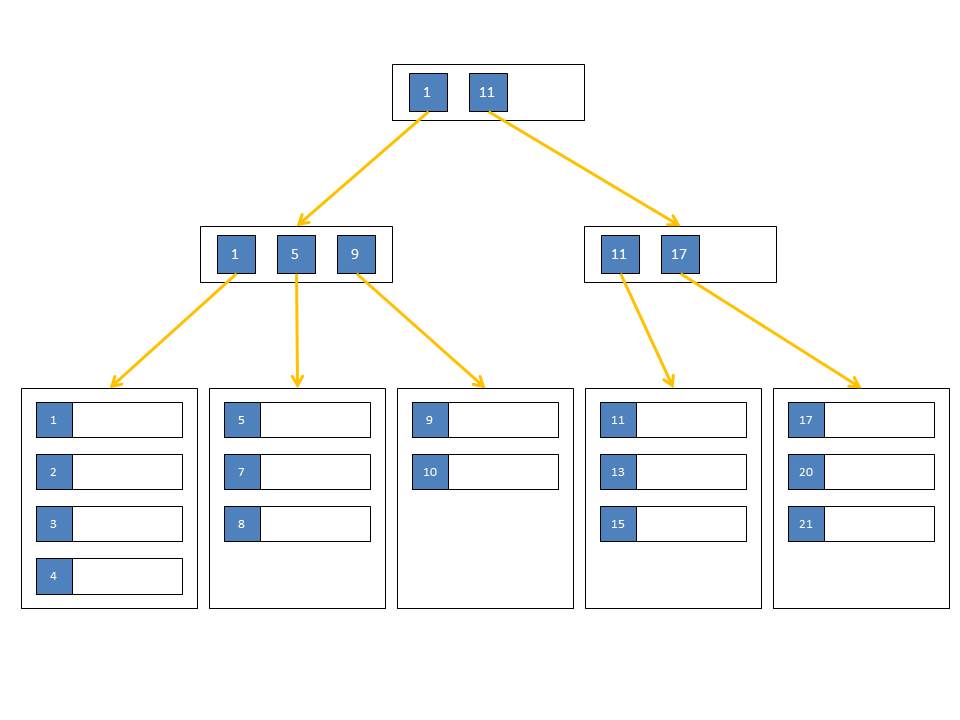

When a query requires rows to be sorted, either directly requested with an ORDER BY clause or because one of the iterators requires it, SQL Server has two options to guarantee that order. The obvious one is to utilize a sort iterator. If the data comes from an index (clustered or covering), SQL Server can also use an "Ordered Scan" of the data. Depending on the requested sort direction, such an ordered scan can be either forward or backward.

This would hardly be worth an article, if there wasn't the peculiarity that SQL Server obviously does not like the idea of having to execute an ordered scan that is directed backwards.

Backward Scan Dislike

Let's look at an example. First we need some tables with data:

IF OBJECT_ID('dbo.T2') IS NOT NULL DROP TABLE dbo.T2;

CREATE TABLE dbo.T1(

Id INT CONSTRAINT T1_PK PRIMARY KEY CLUSTERED,

v1 INT,

c1 VARCHAR(8000)

);

CREATE TABLE dbo.T2(

Id INT CONSTRAINT T2_PK PRIMARY KEY CLUSTERED,

v1 INT,

c1 VARCHAR(8000)

);

INSERT INTO dbo.T1(Id,v1,c1)

SELECT n,CHECKSUM(NEWID()),'*'

FROM dbo.GetNums(1000000);

INSERT INTO dbo.T2(Id,v1,c1)

SELECT n,CHECKSUM(NEWID()),'*'

FROM dbo.GetNums(1000000);

--Get dbo.GetNums here: http://www.sqlmag.com/article/sql-server/virtual-auxiliary-table-of-numbers

[/sql]

This script is creating two tables containing one million rows each. The v1 column is filled with random values; the c1 column just contains a single constant character. The Id column is the clustered primary key and it is providing the ordering on disk that we are going to use.

The query is a little made up. It joins T2 to itself bringing back only one set of columns. It also restricts the result of this join to the first one million rows, sorted by T2.Id. The one million values in T2.v1 are random from a set of 4 billion making them "mostly" unique. That means the self-join is expected to return about one million rows anyway. Those rows are then joined to T1. In the end the ORDER BY requests that all rows be returned sorted by the Id column of the T1 table:

FROM dbo.T1 A

INNER JOIN ( SELECT TOP ( 1000000 )

B.*

FROM dbo.T2 B

INNER JOIN dbo.T2 C ON B.v1 = C.v1

ORDER BY B.Id

) BC ON A.Id = BC.Id

ORDER BY A.Id;

[/sql]

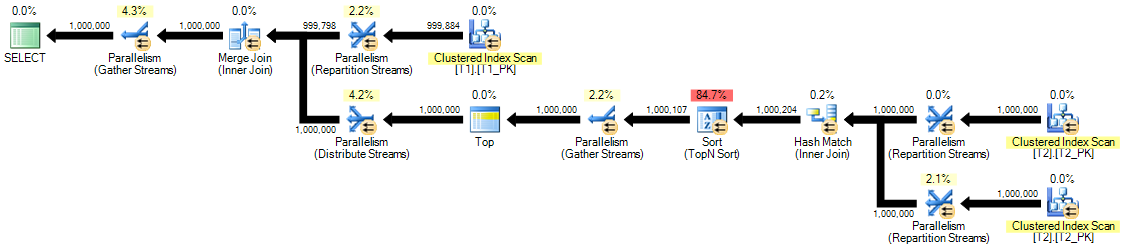

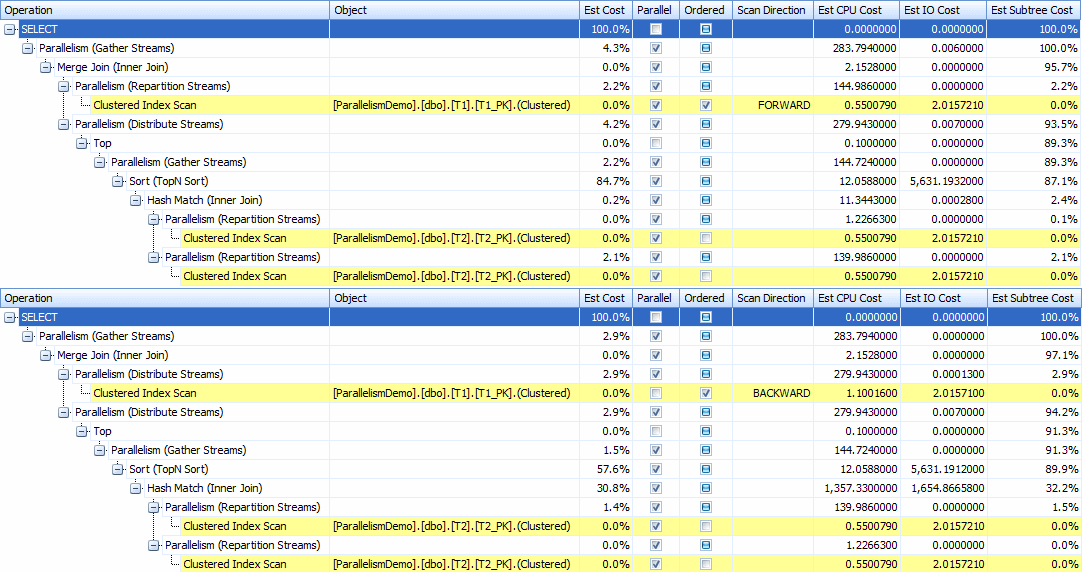

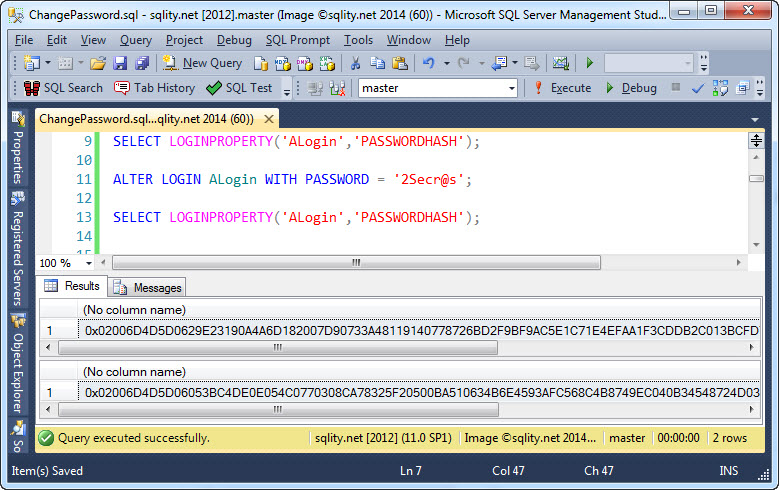

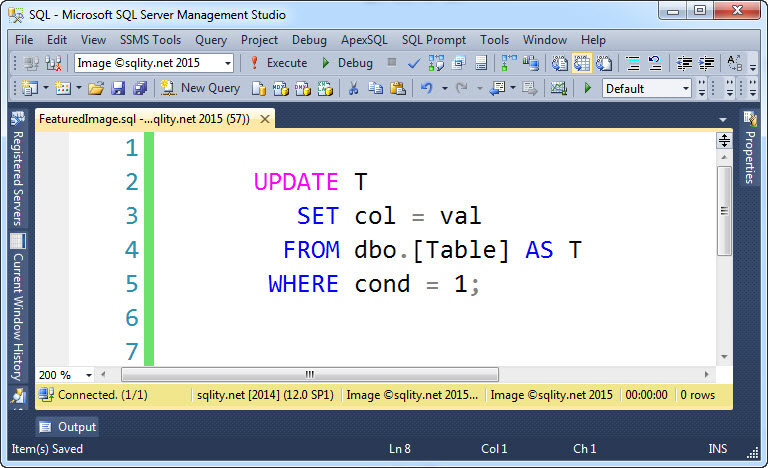

The execution plan of this query looks like this:

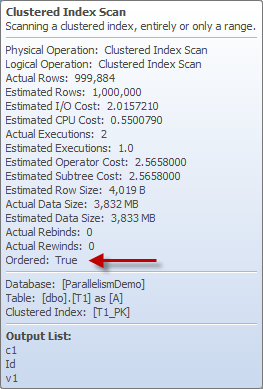

There is nothing unexpected in this plan. The T2 self-join is done with a hash join operator, as there is no index on the v1 column. The data is then sorted by a sort operator and passed through a serial zone for the top operator. After that there is no additional sort operator for the data to pass through. As the merge join requires both streams to be sorted the same way on the join column, this means that the data must be produced sorted by the scan of the T1 table. A quick look at its properties confirms that:

This scan is a forward scan, as both ORDER BY statements are asking to sort by Id ascending. To make this scan go backwards we should just have to change the query to sort by Id descending in both places:

FROM dbo.T1 A

INNER JOIN ( SELECT TOP ( 1000000 )

B.*

FROM dbo.T2 B

INNER JOIN dbo.T2 C ON B.v1 = C.v1

ORDER BY B.Id DESC

) BC ON A.Id = BC.Id

ORDER BY A.Id DESC;

[/sql]

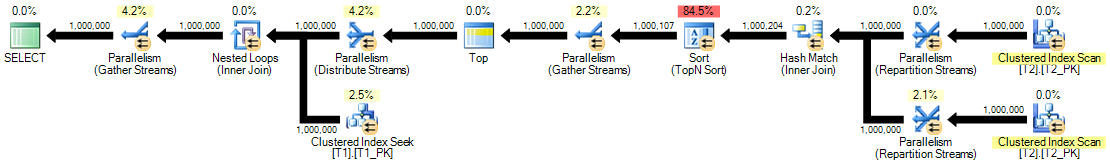

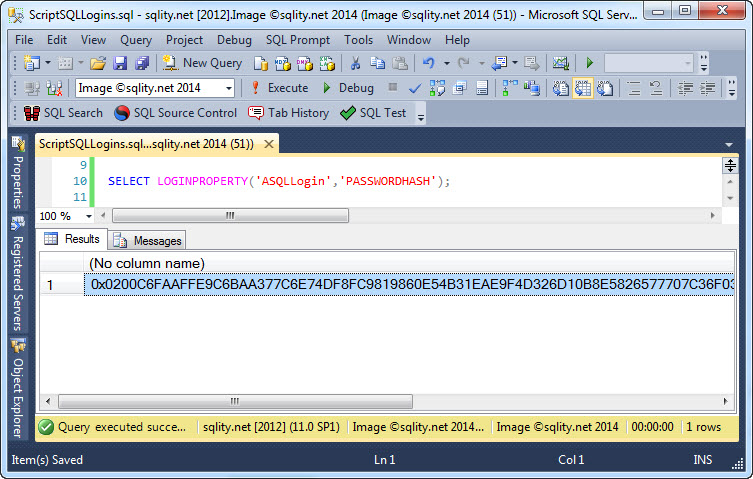

However, this query produces this rather unexpected plan:

A loop join with an index seek against the T1 table to retrieve 1,000,000 single rows – that cannot be good.

Before we look at execution statistics let's force the merge join back by specifying a join hint:

FROM dbo.T1 A

INNER MERGE JOIN ( SELECT TOP ( 1000000 )

B.*

FROM dbo.T2 B

INNER JOIN dbo.T2 C ON B.v1 = C.v1

ORDER BY B.Id DESC

) BC ON A.Id = BC.Id

ORDER BY A.Id DESC;

[/sql]

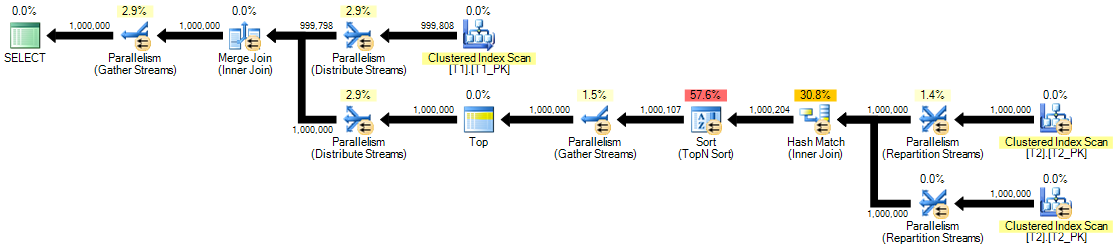

Now we get the expected plan again:

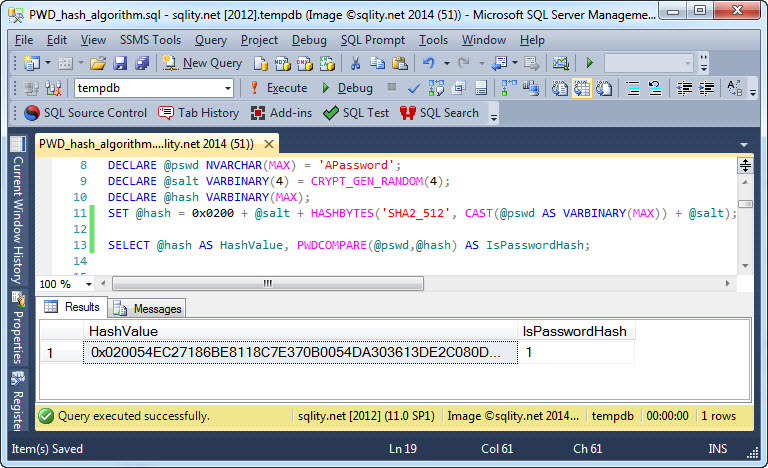

If you compare the properties for the iterators in the forward scan and the backward scan plan you will notice, that the cost of the two scan directions is not that much different:

The estimated cost of the scan iterator is 0% in both cases. The bulk of the work is done in the hash self-join and the following sort of the T2 table.

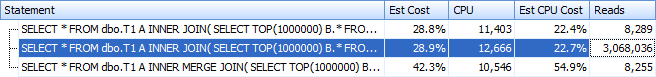

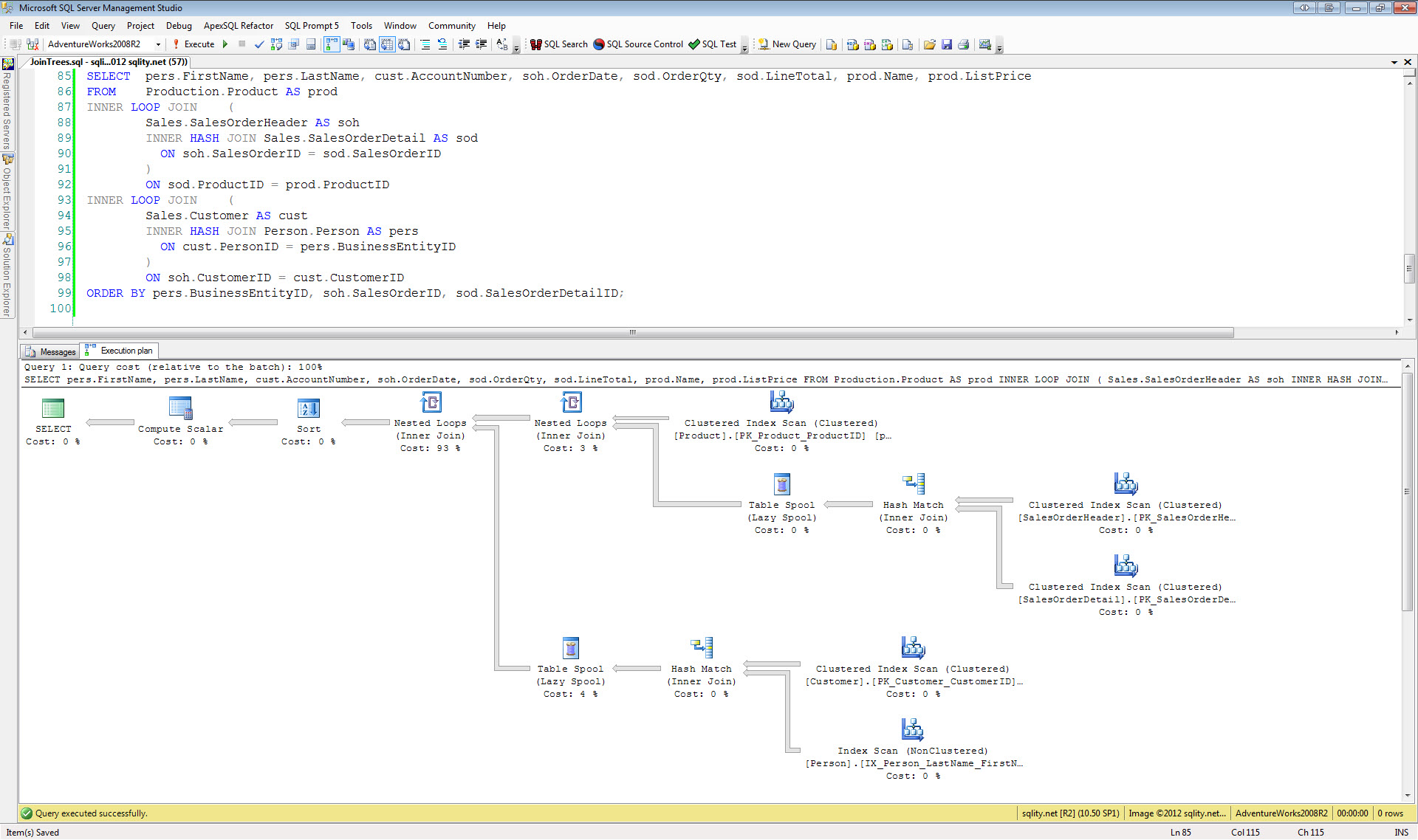

So, let us take a look at the execution statistics of all three queries:

The execution statistics clearly show that the decision to go with a loop join was not necessarily the best. While the estimated cost for the backward scan is about 150% of the estimated cost for the loop join, the actual reads for the loop join version are about 3 million, which is more than 250 times higher than the 8000 reads for either scan direction.

Disk Access

To understand were this dislike is coming from we need to look at the access pattern necessary to retrieve the data from disk. A forward scan of a table (or index), that is not heavily fragmented, looks pretty much like doing a single contiguous read of a big blob of data. SQL Server has a lot of performance optimizations build in that make this type of access as fast as possible. The Read-Ahead mechanism is a good example for that.

A backward scan on the other hand looks quite the opposite: After reading a page, the disk has to do almost a complete turn to get to the previous page, which is the next one in line to be read. This is about the worst kind of random access you can come by.

As SQL Server always assumes that none of the requested pages are in cache, the decision to not get into that backward spinning game seems quite understandable.

Also, if you closely compare the two "scanning" execution plans you will notice that while the forward scan iterator is parallelized, the backward scan operator is executed single threaded. This is a general rule: SQL Server cannot execute a backward scan in parallel. That makes sense as with all that waiting for the disk to do another spin to get to the "next" page, it is unlikely for the data to come in quickly enough to keep multiple threads busy.

Estimates?

But wait, there is one more thing: If you look at the image above and compare the properties of all iterators in the two scanning plans, you will notice something odd. The estimated CPU cost of the "hash match inner join" iterator goes from 11 in the "forward" case to 1357 in the "backward" case; the estimated IO cost makes a similar jump from 0 to 1654. That seems to not make a lot of sense, as the next iterator, the sort, is a blocking iterator. "Blocking" means that the iterator reads in all input rows into a holding area before producing any output rows. This implies that anything on the left side of a sort should not be able to influence the cost of operators on the right side of it. That is, unless it affects the number of rows significantly, as this is a TOP N Sort. See TOP N Sort – A Little Bit of Sorting for an explanation. But as in this example all columns are "mostly" unique, the optimizer guesses correctly that about one million rows get passed into the hash join on each input and also about one million rows come out the other end. So row count estimates should not matter here.

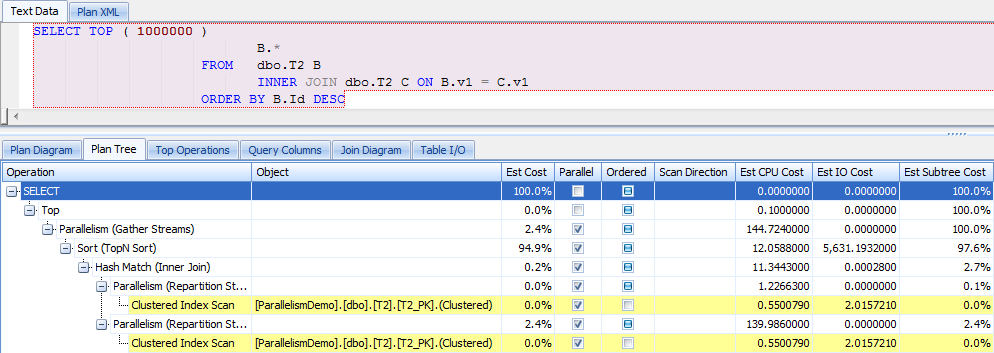

If you look at the estimates when executing just that inner query sorting backwards, you will see that they exactly match the ones in the imbedded forward sorting case:

That means that the scan direction change of the T1 table causes the estimates on the other side of the merge join to get completely thrown off.

Conclusion

SQL Servers dislike of backward scan operations is understandable when looking at the work necessary to retrieve the records from disk in opposite index order. Additionally, a backward scan cannot be executed in parallel. That adds to the list of reasons, why the backward scan seems unloved.

However, the main reason why SQL Server avoids backward scans seems to be that they throw its cost estimations off, making following iterators appear a lot more expensive than they really are.