Test Data – Why Sharing is Not Always Caring

If you have read the first and second of this series about fragile tests, you know that fragile tests can be a huge problem, undermining your unit testing efforts. With this post, I am continuing the series by looking at a problem common to database tests: shared test data.

Why We Test

When investing time into developing unit tests for our products we probably have a few obvious reasons to do so. You might test your code to produce a high quality product (and with it happy customers). You might test to reduce the number of coding mistakes you make. You might test because you know that it will lead to more flexibility when implementing changes down the road.

These are all fairly obvious reasons. However, they all have one underlying and not quite so obvious driver: time - your time to be exact.

Writing unit tests is an investment. This investment has a cost. That cost can be measured in development time. In business (and even outside of a business setting), any investment needs to make sense, and the "does this make sense" factor is usually described as ROI (Return On Investment). An investment makes sense if it has a positive ROI. As we have decided to measure the investment in time, it is appropriate to measure the ROI in time as well. So, in plain English, unit testing your code makes sense if it saves development time.

Unit tests are actually quite good at saving us time: For example, a good unit test suite reduces the time that it takes from you making a coding mistake to you discovering that something went wrong. Because you just made the change, it will take you significantly less time fixing the problem. Other time saving advantages to unit tests include the greater flexibility and confidence that allows us to keep up with new or changing requirement more easily.

The Problem with Shared Test Data

A good unit test suite saves us time. However, a bad unit test suite easily can add additional time to all future development. One form of fragile test is particularly nasty in this regard: A test that requires to be changed every time a new (unrelated) requirement comes about.

If you have many of those high maintenance tests, you end up spending most of your time fixing and rewriting tests. That quickly leads to frustration in the development team and will likely cause the unit testing effort to be declared a failure.

So, what does that have to do with shared test data, you ask - a lot, actually: Shared test data is the most common cause for high unit test suite maintenance costs, which in turn causes unit testing projects to be abandoned. In short:

Shared test data causes unit testing projects to fail.

The reason is simple. But before I explain, let me first define what I mean with shared test data.

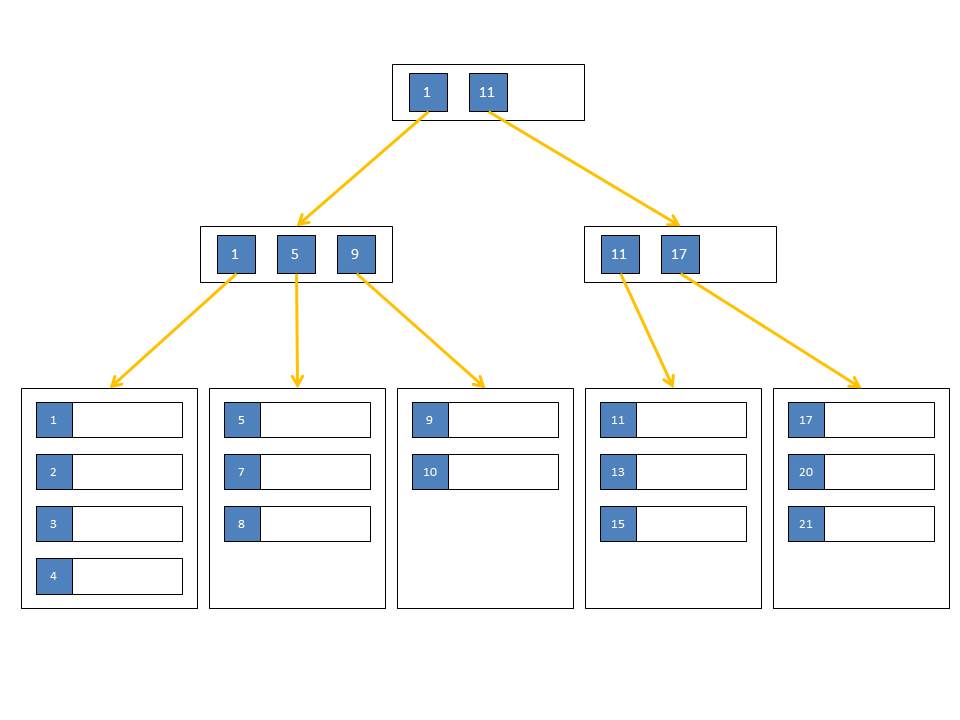

The same factors that let us call a database (in an RDBMS environment) well designed, make it particularly hard to test. Every constraint, from the simple data type (yes, that is a constraint) to more complex foreign key (FK) or check constraints seems to be there only to prevent us from inserting adequate test data. Let us look at an example in the AdventureWorks database. If I have to test a module that counts sold quantities per ProductID, I need to insert a few records into the Sales.SalesOrderDetail table. That is likely the only table accessed by this module. However, because of a well-designed constraint system I need to add an entry in the Sales.SalesOrderHeader, the Production.Product and the Sales.SpecialOffer tables, before I can insert a row into the Sales.SalesOrderDetail table. Those three tables together have a total of six foreign key constraints that require rows to exist in additional tables. That keeps on going until almost every table in the database seems to contain rows. In fact, there are 45 foreign keys defined on the Sales.SalesOrderDetail table and its direct ancestors. Granted, not all of them are required, as some of the columns are nullable, but you are still looking at a substantial amount of work. And remember, all we wanted to do is insert a few rows into the Sales.SalesOrderDetail table.

The most obvious solution to this is to pre-populate all tables with a good set of test data. With that in place, we can skip the setup in our tests and just construct the assertion section to expect results based on the existing data. The test data we could even auto-generate, so we do not have to think about it at all. This type of test data is what I call "shared test data". It has plenty of benefits - at first glance. However, once you dig deeper, the disadvantages clearly outweigh the benefits.

Life usually goes like this: After you have used that test data in a number of unit test, a new feature request comes in. Looking through the existing test data, none of the records in the Sales.SalesOrderDetail table match what is needed for this new feature. So a new record is quickly inserted. You might have to also add a few records into the other tables that the 45 FKs are pointing at, but this is still easy enough. But now, when you rerun your tests, you notice that most tests that access any of the changed tables suddenly fail. All the effort you put into coming up with their expected outputs is invalidated, just because you needed to add a single additional row to the database.

You can see where that is going. Change is supposed to be easy with unit tests, but shared test data makes it actually quite hard. Shared test data also makes it hard to read the tests, as you have to dig into the database tables to see the test data before you can understand what the test is trying to assert. In addition, shared test data makes some tests impossible to write. Think about testing the behavior of your module against an empty table. Or testing a behavior dependency on the number of rows in the table. For example, you cannot have an even and an odd number of rows in a table at the same time. Finally, shared test data tends to inflate the total amount of test data, which in turn makes the entire test suite run slower.

How to Avoid Shared Test Data

Shared test data is bad. But, what can we do about it?

The answer to that question is isolation. Isolation is a common unit testing technique in the object-oriented (OO) world. Isolation in a unit test is achieved by replacing secondary objects with test doubles. If the module I am testing makes use of another object, the test does not care about how that object does its magic. It only cares about the object being accessible in a specific way. For example, the module under test might use the foo method of the secondary object and expect a string to be returned. In a test, we can replace that object with a test double, as long as the double also has a foo method that takes the same parameters and returns a string. The foo method of the test double can return a hard-coded string, which makes writing and reading the test a lot easier. This process of replacing secondary objects in a test is commonly called "mocking". The test doubles are then called "mock objects".

In the database world, procedures often make use of other procedures or functions. After reading above paragraph, it might feel like a good idea to replace those secondary SQL modules with test doubles in SQL unit tests. In fact, that is a great idea. It has very similar benefits to mocking in OO development. In tSQLt you can use tSQLt.SpyProcedure and tSQLt.FakeFunction to achieve this easily.

Faking other SQL modules does not yet help us to get away from shared test data. However, if you think about it, a table is also just a secondary object. The SQL module does not care when accessing a table, if that table is the original, or if it is a replacement - a fake table - that looks identical. In fact, when reading data, the module does not care if the table has constraints defined on in either. When writing data, the matter is not quite as obvious, but a unit test should not rely on that constraint being there either. That all means, that we can safely replace all tables accessed by the module under test with fake tables.

In tSQLt, replacing a table within a test with an equally looking fake requires the use of the tSQLt.FakeTable procedure. Staying in AdventureWorks, let us look at an example:

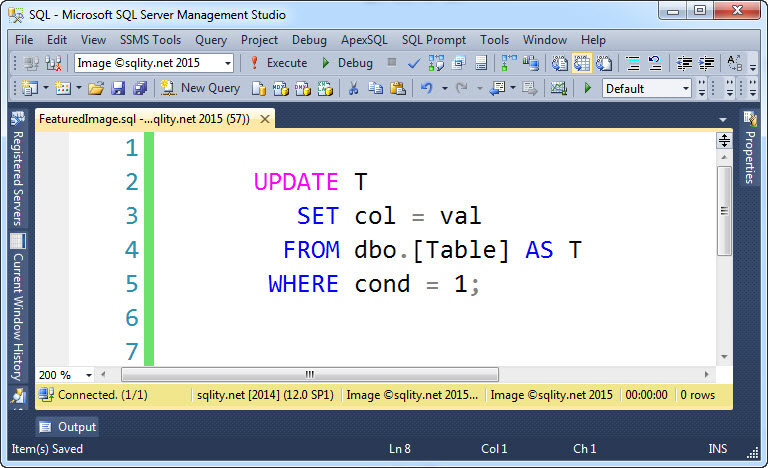

BEGIN

EXEC tSQLt.FakeTable @TableName = 'Sales.SalesOrderDetail';

INSERT INTO Sales.SalesOrderDetail(ProductID,OrderQty,LineTotal)

VALUES(42,7,77.60),(42,2,12.38),(42,1,3.02);

SELECT ProductID, TotalQty, TotalValue

INTO TotalSalesByProductTests.Actual

FROM Reports.TotalSalesByProduct;

SELECT TOP(0) *

INTO TotalSalesByProductTests.Expected

FROM TotalSalesByProductTests.Actual;

INSERT INTO TotalSalesByProductTests.Expected

VALUES(42,10,93.00);

EXEC tSQLt.AssertEqualsTable 'TotalSalesByProductTests.Expected','TotalSalesByProductTests.Actual';

END;

[/sql]

The call to tSQLt.FakeTable at the beginning of the procedure automatically moves the existing table out of the way to then create a new table that looks just like the original. However, while data types are preserved, no other constraints (including nullability and foreign key constraints) are replicated to that new table. As the table is created from scratch, it starts out with now rows in it (even if the original table did contain rows). That allows us to concentrate on (and then insert) just the data needed for our test.

The above example is testing the view Reports.TotalSalesByProduct. The test tells us that it has (at least) three columns: ProductID, TotalQty and TotalValue. To determine those values, the view needs to look at only three columns of the Sales.SalesOrderDetail table: ProductId, OrderQty and LineTotal. The view has no need to be concerned with things like the SaleOrderID or the ModifiedDate, so the test should not be concerned with them either.

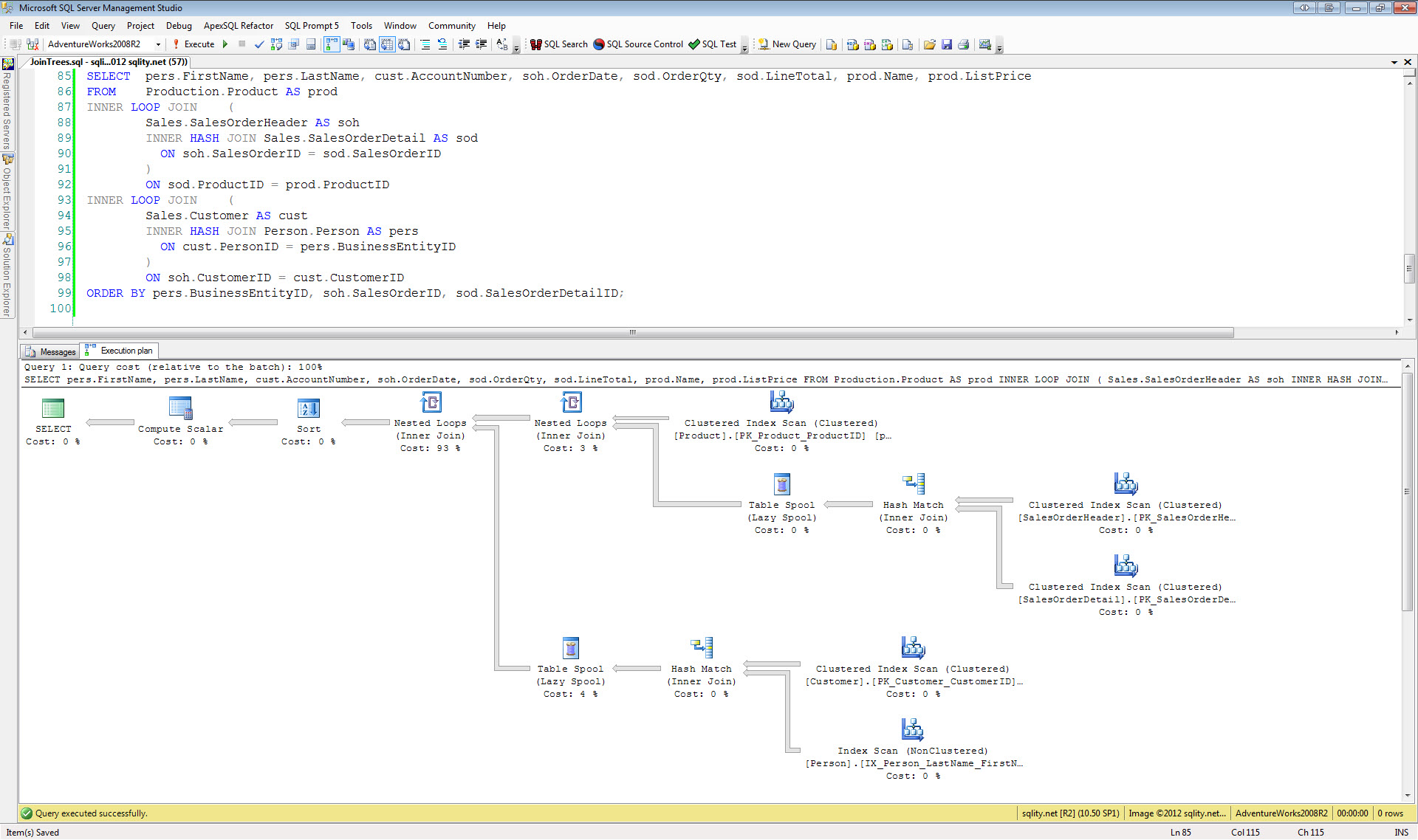

For the same reason, we should not have to deal with entries in the Sales.SalesOrderHeader, Sales.SpecialOffer or tables. The code we are testing is not accessing either of those tables, so having rows in there is not going to change the behavior of the tested code. (It might cause a change in the execution plan SQL Server is selecting, but it will not change the result.)

Assigning values to these unused columns or adding rows to those irrelevant tables just adds noise to the test that makes it harder to understand what is going on. In addition, having this unnecessary data within your tests makes it more likely for tests to have to change when an unrelated feature is modified.

Remember, our goal is to save time. If the test fails, we want to be able to assess quickly what the test is trying to prove, so that we can fix the out-of-line behavior. Any unnecessary clutter in the test code will slow us down during writing of the test and during reading of the same later on. Any unrelated failures will cause tensions and frustrations among the development staff.

Final Thoughts

Do not rely on shared test data when writing unit tests. Instead, operate with a concise amount of data tailored to each test and set it up within the test. That makes tests easier to write, to read and to maintain. It also significantly reduces the chance of a change to an existing feature affecting large numbers of unrelated tests.

The main goal of a unit test is to confirm a specific behavior of the code. That can often be achieved with just a few rows of data. Always remember:

A unit test test's goal is to assert behavior of a single unit, not of the entire system.

There are other types of test needed when developing software, including performance tests, security tests, usability tests and integration tests. Unit tests cannot and should not be used to cover all of these areas. Therefore, unit tests usually require only very little test data.